Testing BRO: Results & Statistics

We set out to test whether the BRO algorithm could truly outsmart the natural league table at predicting final standings. To do this, we compared BRO-generated tables against the “natural” tables (the official standings at the time BRO was applied) and measured which offered the better forecast for each season.

Scope & Data

To focus on the most competitive and scheduling-challenged environments, we ran the test across the three most important European leagues (as ranked by UEFA): the English Premier League, La Liga and Serie A, covering all seasons from 2003/04 through 2024/25. We also included the 2024/25 UEFA Champions League and the major US leagues (NBA, NFL, NHL and MLB), with seasons ranging from 2016/17 through 2024/25. In total, the data set contains 125 league-seasons in which the natural league table and the BRO league table can be compared directly.

Method: a simple, powerful comparison

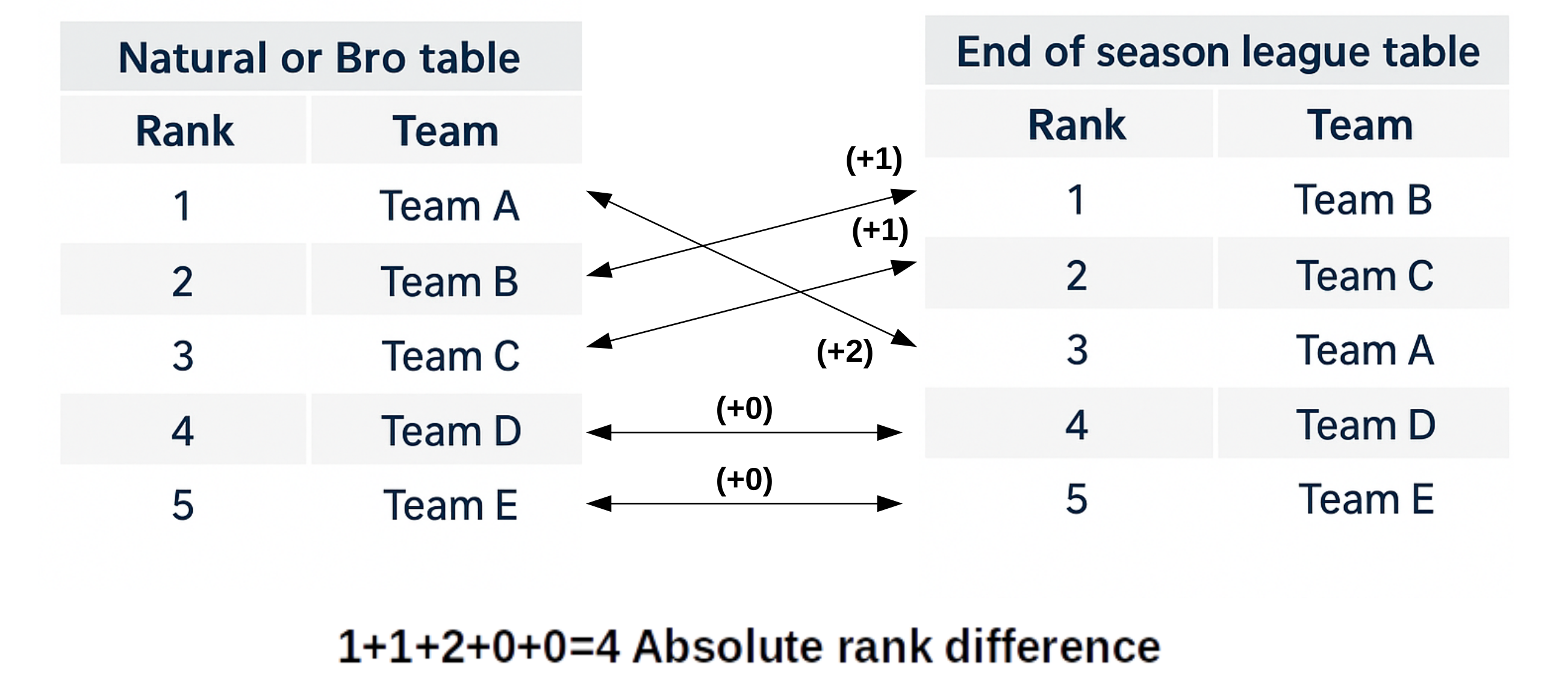

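For every league-season, we scored each candidate table by the sum of absolute rank differences between it and the final league table: for each team, take the absolute difference between its position in the candidate table and its final position, then add these up across all teams. A lower score means the candidate table is closer to the final standings.

Specifically, we: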

- Calculated the sum of absolute rank differences between the BRO-generated table and the final table.

- Calculated the sum of absolute rank differences between the natural (official) table and the final table.

- Subtracted the natural table’s score from the BRO table’s score to produce a bias value.

A negative bias indicates that the BRO table predicts the final standings better than the natural table, and the more negative the value, the stronger the improvement. A positive bias means the natural table was closer.
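The scoring steps above can be sketched in a few lines of Python. This is a minimal illustration, not the code used for the study: the function names `rank_distance` and `bias` are hypothetical, each table is assumed to be a list of team names ordered from first to last place, and the four-team league is made up.

```python
def rank_distance(candidate, final):
    """Sum of absolute rank differences between a candidate table and the final table.

    Both arguments are lists of team names ordered from first to last place
    (an assumed representation, chosen for illustration).
    """
    if len(candidate) != len(final) or set(candidate) != set(final):
        raise ValueError("both tables must contain exactly the same teams")
    final_rank = {team: pos for pos, team in enumerate(final, start=1)}
    return sum(
        abs(pos - final_rank[team])
        for pos, team in enumerate(candidate, start=1)
    )


def bias(bro_table, natural_table, final_table):
    """BRO score minus natural score; negative means BRO was closer to the final table."""
    return rank_distance(bro_table, final_table) - rank_distance(natural_table, final_table)


# Made-up four-team league, for illustration only.
final_table = ["A", "B", "C", "D"]
natural_table = ["B", "A", "D", "C"]  # every team is one place off: distance 4
bro_table = ["A", "B", "D", "C"]      # only C and D are swapped: distance 2

bias_value = bias(bro_table, natural_table, final_table)
print(bias_value)  # -2
```

In this toy case the bias is negative, so the BRO table would count as the better forecast for that league-season.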

Why the 33% mark?

We performed these comparisons at the 33% games-played point in each season. That checkpoint is ideal: enough matches have occurred to reveal early differences in team strength, but not so many that scheduling biases have already washed out. In other words, it’s the sweet spot where BRO’s corrections can matter most.

Statistical testing

To test whether observed differences were real (and not random noise), we ran two significance tests across the dataset:

- A one-tailed paired t-test (null hypothesis: BRO does not improve prediction)

- The Wilcoxon signed-rank test as a non-parametric confirmation

Both tests returned p-values below 0.05, allowing us to reject the null hypothesis at the 5% significance level and conclude that the improvement is statistically significant.
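To make the two tests concrete, here is a standard-library sketch that treats the per-league-season bias values as the paired differences. It is an illustration under assumptions, not the analysis actually run: the function names and the eight bias values are invented, the t-test part only computes the statistic and degrees of freedom (the p-value would come from a Student t-distribution, typically via a statistics library), and the Wilcoxon p-value is obtained by exact enumeration of sign patterns, which is only practical for small samples.

```python
import math
import statistics
from itertools import product


def paired_t_statistic(biases):
    """t statistic and degrees of freedom for H1: mean bias < 0."""
    n = len(biases)
    mean = statistics.mean(biases)
    sd = statistics.stdev(biases)  # sample standard deviation (n - 1 denominator)
    return mean / (sd / math.sqrt(n)), n - 1


def signed_ranks(biases):
    """Rank the non-zero |bias| values (ties get the average rank).

    Returns a list of (rank, is_negative) pairs, one per non-zero bias.
    """
    nonzero = [b for b in biases if b != 0]
    ordered = sorted(nonzero, key=abs)
    rank_of = {}
    i = 0
    while i < len(ordered):
        j = i
        while j < len(ordered) and abs(ordered[j]) == abs(ordered[i]):
            j += 1
        rank_of[abs(ordered[i])] = (i + 1 + j) / 2  # average of ranks i+1 .. j
        i = j
    return [(rank_of[abs(b)], b < 0) for b in nonzero]


def wilcoxon_one_sided(biases):
    """Positive-rank sum W+ and its exact one-sided p-value (H1: biases tend to be negative).

    Enumerates all 2**n sign patterns, so use it only for small n.
    """
    pairs = signed_ranks(biases)
    ranks = [rank for rank, _ in pairs]
    w_plus = sum(rank for rank, negative in pairs if not negative)
    as_extreme = sum(
        1
        for signs in product((0, 1), repeat=len(ranks))
        if sum(r for r, s in zip(ranks, signs) if s) <= w_plus
    )
    return w_plus, as_extreme / 2 ** len(ranks)


# Invented bias values for eight league-seasons, for illustration only.
example_biases = [-2, -4, 0, -1, 2, -6, -3, -2]

t_stat, dof = paired_t_statistic(example_biases)
w_plus, p_wilcoxon = wilcoxon_one_sided(example_biases)
print(t_stat, dof, w_plus, p_wilcoxon)
```

A strongly negative t statistic and a small Wilcoxon p-value both point the same way: the bias values sit below zero more than chance alone would explain.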

The verdict 🏆

At the one-third season mark, the BRO algorithm consistently delivers league tables that are not only fairer but also statistically better predictors of the final standings than the natural table. The improvement is subtle but robust, and it holds across the world's toughest competitions.

In short: BRO beats the table. It reveals hidden fairness and gives a clearer, more predictive snapshot of how teams are truly performing.

Note: The current evidence should be considered exploratory, because the endpoints were not specified a priori, which leaves the multiplicity (the number of comparisons implicitly tested) undefined. To move forward, once we settle on a final set of parameters as described here, we need to establish a formal testing framework similar to that of a clinical trial, in which the endpoints are pre-specified, thereby eliminating potential bias from multiplicity.